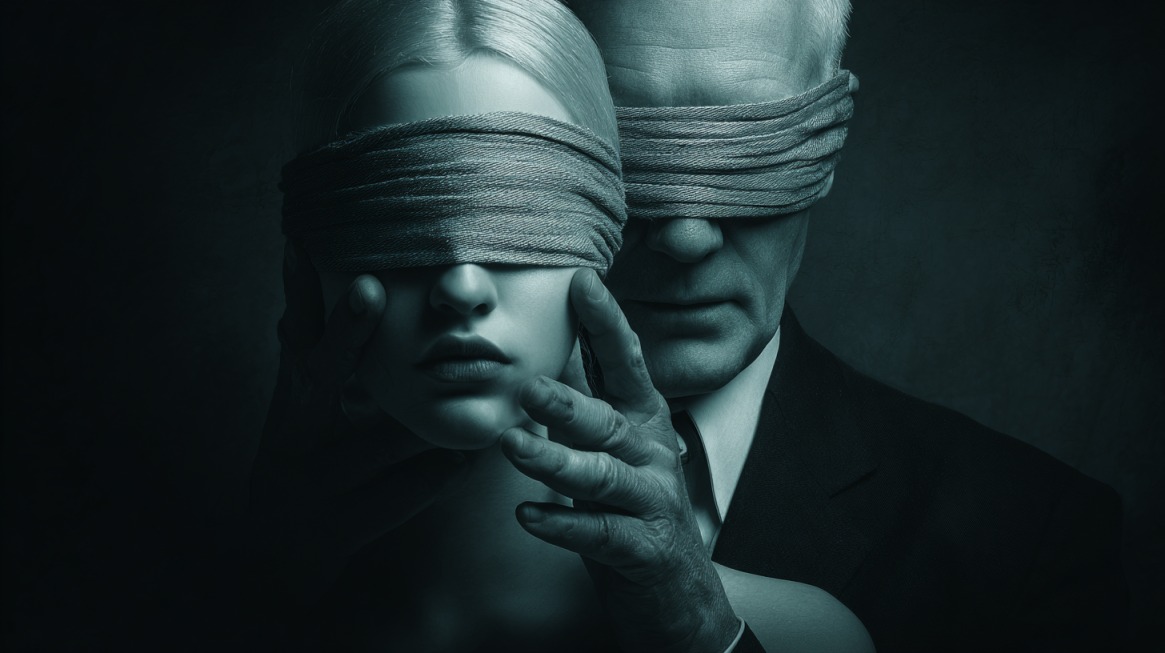

Online grooming involves deliberate manipulation aimed at children and teens through digital communication.

Abusers establish emotional dependence, normalize secrecy, and progress toward sexual exploitation, sextortion, or trafficking.

Rapid growth across social platforms has accelerated risk exposure at an unprecedented scale.

During the first half of 2025, NCMEC logged over 518,000 reports tied to online enticement, a year-over-year rise of roughly 77% from 292,951.

Reports of abuse connected to generative AI surged to more than 440,000 in early 2025, up from only 6,835 during the same period in 2024.

Such figures confirm a sharp escalation in both threat volume and technical sophistication.

| Phase | Tactics | Impact | Indicators and reported data |
|---|---|---|---|
| Contact | Flattery, shared interests, validation | Fast trust, lowered suspicion | Offender positioned as sole support |
| Desensitization | Sexual jokes, gradual requests | Normalized violations | Sexual talk framed as curiosity |
| Isolation | Secrecy, private messages | Dependence, silence | Chat deletion, secrecy pressure |
| Sextortion | Threats, money demands | Fear, compliance | 23,593 cases in early 2025 |
| Sadistic | Humiliation, self-injury | Severe trauma | 1,093 cases in early 2025 |
| Vulnerability | Targeted empathy | Emotional control | Risk tied to isolation and trauma |

Grooming Tactics and Psychological Manipulation

Online grooming relies on progressive behavioral conditioning rather than sudden escalation.

Offenders apply psychological pressure in calculated phases, adjusting tone and intensity based on a child’s reactions.

Control increases as emotional reliance grows, making resistance and disclosure increasingly difficult.

Initial Contact and Trust Building

First interactions appear harmless and socially familiar. Compliments, humor, shared interests, and attentive listening create quick rapport. Conversations often mirror peer dynamics, reducing suspicion and lowering defensive barriers.

Emotional reassurance becomes a primary tool during periods of stress, family conflict, or social isolation.

- excessive praise tied to appearance or maturity

- validation of frustrations involving parents, teachers, or peers

- positioning of the offender as the only person who truly listens

Reliance on consistent communication reinforces perceived safety and loyalty.

Desensitization

Gradual normalization of sexual language marks a critical transition. Tone shifts slowly, beginning with suggestive jokes or questions framed as curiosity. Sexual references appear intertwined with affection, humor, or claims of closeness.

Requests escalate carefully. Visual content often starts non-explicit before progressing toward sexual images or videos. Repeated exposure reduces shock response and reframes boundary violations as normal interaction rather than abuse.

Isolation

Secrecy functions as a control mechanism. Groomers encourage private conversations and discourage disclosure, often portraying adults as intrusive or untrustworthy.

Movement toward encrypted or disappearing message platforms reduces oversight and increases dependency.

- discouraging screenshots or message sharing

- urging deletion of chat histories

- framing secrecy as proof of maturity, trust, or love

Social withdrawal limits access to protective relationships.

Coercion and Sextortion

Threats emerge once compromising material exists. Demands escalate rapidly and often include additional explicit content, money, or continued interaction. Exposure threats leverage shame and fear to maintain compliance.

- 23,593 financial sextortion reports within six months

- Prior count of 13,842 during 2024

Boys experience disproportionate targeting in financially motivated schemes, often tied to gaming platforms and social media messaging.

Sadistic Grooming

Some networks pursue control through humiliation and physical harm. Victims are pressured to engage in self-injury during livestreams or recorded sessions. Acts are framed as demonstrations of devotion, loyalty, or proof of affection.

- 1,093 sadistic online enticement cases during early 2025

- 508 reports during the comparable prior period

Psychological harm in such cases often exceeds immediate physical injury.

Vulnerability Targeting

Personal challenges increase susceptibility. Loneliness, low self-esteem, neurodivergence, family instability, and prior trauma attract deliberate attention. Emotional dependence grows through targeted reassurance and selective empathy.

Control strengthens as victims internalize guilt, responsibility, or fear of consequences tied to disclosure.

How Groomers Reach Children on Social Media

Digital environments used daily by children create repeated points of contact that feel ordinary and unthreatening. Outreach rarely appears aggressive or overt. Casual interaction hides harmful intent, allowing exploiters to integrate slowly into a child’s online routine without raising immediate concern.

Parents should know which precautions they can take to reduce these risks.

Initial contact often mirrors common peer behavior. Comments, likes, reactions, and short messages serve as low-risk entry methods. Familiarity grows through frequency rather than intensity, reducing suspicion over time.

Platforms most often used share features that favor rapid connection and private communication. Common access points include Instagram, TikTok, Snapchat, Discord, Roblox, and multiplayer gaming chats.

- replies to public posts or stories

- direct messages referencing shared interests

- friend requests tied to mutual connections

- in-game chat during cooperative or competitive play

Gaming environments present added risk due to voice chat, team dynamics, and prolonged unsupervised interaction.

Identity deception supports long-term manipulation. Fake accounts rely on stolen photos, invented backstories, and altered ages to appear credible. Trust builds as profiles reflect relatable hobbies, music tastes, or school-related topics.

Generative AI now allows offenders to maintain believable childlike personas capable of extended conversation without live human input, increasing scale and persistence.

Automated interaction enables continuous engagement across time zones and platforms. Consistency reinforces perceived authenticity and emotional availability.

Regulatory attention within the European Union increasingly focuses on platform responsibility. Accountability efforts address systemic risks linked to algorithmic promotion, private messaging architecture, and limited protections designed specifically for minors.

Policy direction reflects recognition that design choices influence exposure, access, and concealment of abuse.

AI, Deepfakes, and Emerging Grooming Enhancers

Artificial intelligence significantly expands offender reach, speed, and persistence. Digital tools reduce the effort required to target large numbers of children while increasing realism and emotional manipulation.

Abuse no longer depends on constant human interaction, allowing exploitation to scale rapidly across platforms and time zones.

Image generation tools enable the creation of explicit deepfakes using publicly accessible photos gathered via social profiles, school websites, and online directories. Fabricated material often appears indistinguishable from real images, intensifying fear and compliance.

Chat-based AI systems simulate age-appropriate conversation, humor, and emotional responses, creating the illusion of a peer relationship maintained continuously.

- generation of sexualized images tied to a real child’s likeness

- automated sexual conversation designed to mimic adolescent behavior

- rapid adaptation of language based on victim responses

Automation also accelerates psychological manipulation. Scripted guidance provides offenders with prompts that outline pacing, escalation timing, and response strategies. Resistance becomes easier to manage through predefined tactics that reframe hesitation as betrayal, fear, or lack of trust.

Operational advantages increase further through coordination. AI-assisted tools support simultaneous engagement with multiple victims, maintaining consistency and emotional availability that reinforces dependence.

Offline harm remains severe, particularly within school communities. So-called prank deepfakes frequently target girls, leading to harassment, reputational damage, social exclusion, and long-term psychological trauma.

Circulation within peer groups amplifies harm and complicates recovery.

European Union regulatory efforts increasingly address the misuse of AI-generated sexual material. Policy attention reflects recognition of escalating threat severity tied to synthetic media, automation, and insufficient safeguards protecting minors.

Trends and Statistical Evidence 2025 to 2026

CyberTipline data covering January through June 2025 reveals dramatic increases across multiple categories. Growth reflects both expanded detection and genuine escalation in offender activity.

- 518,720 online enticement reports, up from 292,951 a year earlier

- 440,419 generative AI-related abuse reports, up from 6,835 in the same period of 2024

- 23,593 financial sextortion incidents

- 62,891 child sex trafficking reports, up from 5,976 previously

- 1,093 sadistic online enticement cases

Expanded reporting requirements under the U.S. REPORT Act contributed to improved visibility. Data trends indicate rising scale, increased technical sophistication, and broader victim targeting rather than reporting artifacts alone.
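To put the size of these increases in perspective, the short Python sketch below computes the percentage change and growth multiple for the three categories where this article gives both a current and a prior count. The figures are copied from the list above; the names `REPORTS` and `percent_change` are illustrative only and do not come from NCMEC.

```python
# Counts quoted in this article: (prior count, January through June 2025).
# The dictionary and function names are illustrative, not an official schema.
REPORTS = {
    "online enticement": (292_951, 518_720),
    "generative AI-related abuse": (6_835, 440_419),
    "child sex trafficking": (5_976, 62_891),
}


def percent_change(previous: int, current: int) -> float:
    """Return the relative change from previous to current, in percent."""
    if previous <= 0:
        raise ValueError("previous count must be positive")
    return (current - previous) / previous * 100


if __name__ == "__main__":
    for category, (previous, current) in REPORTS.items():
        change = percent_change(previous, current)
        multiple = current / previous
        print(f"{category}: {change:+.1f}% ({multiple:.1f}x the prior count)")
```

For online enticement this works out to an increase of roughly 77%, while the generative AI and trafficking categories grew by large multiples of their prior counts. Percentages on a small base, such as 6,835 reports, can look extreme, so reading the multiple next to the raw counts gives a fairer picture.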

Summary

Grooming activity in 2026 relies heavily on advanced technology combined with psychological manipulation.

Artificial intelligence, deepfakes, and encrypted communication amplify harm and complicate intervention.

Coordinated action across technology companies, lawmakers, educators, caregivers, and child protection organizations remains essential to counter rapidly advancing exploitation tactics and reduce harm to children worldwide.